"Dead Synchronicity is one of the most disturbing games I've ever played." I took a break from reviewing Dead Synchronicity to tweet that sentiment out the other day, and it's still the easiest way I've found to summarize the game. It's upsetting. It's psychological horror on a very real, unsettling level.

It's...a point-and-click adventure game. Yeah, not exactly the genre I expected to be deeply unsettled by. But it's true—Dead Synchronicity is horrifying.

Warning: Disturbing content follows.

You play the part of Michael—or at the very least, you think your name is Michael. You don't really know. You wake up in a trailer with amnesia, a condition that's become so normal you're referred to by your caretaker as a "blankhead," with sympathy rather than derision.

The world ended—not by way of nuclear weapons, or aliens, or epidemics, or any of the other means humanity tried to predict. Instead, an enormous gash opened up in the sky and entire cities were destroyed by what survivors are calling "The Great Wave." In the aftermath of the Great Wave, the military's moved in to re-establish order and prevent looting.

There's martial law. There are curfews. There are men with guns in the streets. Anyone caught outside after dark is shipped off to a prison "refugee" camp on the outskirts of town. This is where you, a man without a name, come in.

It's a bleak, albeit not wholly original, set-up. What makes Dead Synchronicity stand out is the fact that the game doesn't immediately scrap this intro tone and make you a freedom-fighter, hero for the oppressed. Instead, you're just a normal guy trying to survive and figure out what the hell happened to you—by whatever means necessary.

People tend to make a furor over games like Mortal Kombat or Postal 2 because they're violence-as-display. They're graphic. You can't help but wince as a muscle-bound dude takes two sharp knives to his eyeballs, for instance.

Taken in another light though, something like Mortal Kombat is absurd. It's watching cartoon characters fight—like watching an anvil fall on Wile E. Coyote and crush him flat. "Oof, that's gotta hurt," you say, but you know the character's coming back for the next sketch. It's silly!

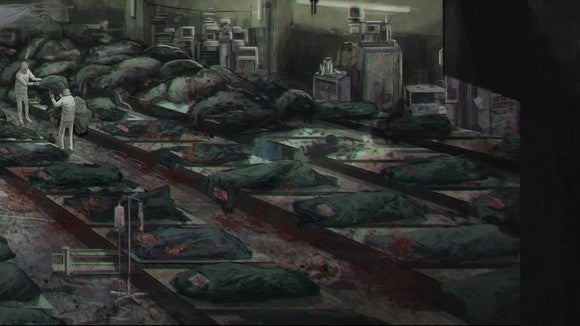

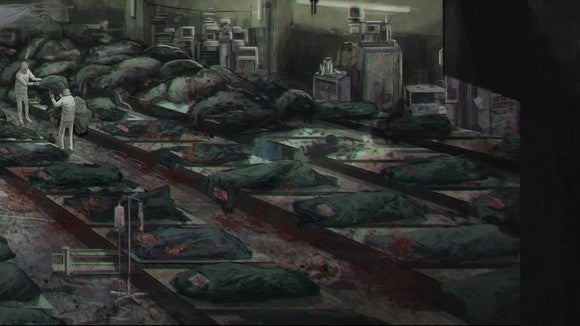

Dead Synchronicity, by contrast, is understated in its violence. That's not to say it's never graphic. The art is often a glimpse into hell, such as the aptly-named "Suicide Park."

It reminds me a lot of Gerald Scarfe's animations for The Wall, to be honest.

But the art is surface-level horror. There's a deeper, more existential dismay to be found in Dead Synchronicity: a level of "Wait, you want me to do what to solve this puzzle?" Then you do whatever unspeakable thing the game wants you to do, and your character immediately starts scrutinizing his own actions. "Is a good deed still good if done for the right reasons, regardless of whether others are harmed in the fallout?" or, to put it another way, "Do the ends justify the means?"

It's beginner's philosophy, for sure, but the bar is so low in video games that even something like Dead Synchronicity feels like an interesting exploration, especially since it's often your own actions that come under scrutiny. It's the same "Do you enjoy violence?" themes explored in Hotline Miami and Spec Ops: The Line without being quite as hamfisted about it.

Which makes it a shame I can't unabashedly recommend the game. I'm torn over Dead Synchronicity. I've spent the last week alternately being awed by its story ambitions and hating playing it.

Key to my ire is the fact that this is a point-and-click adventure. Now, if you've read my reviews in the past you know I tend to fall on the side of "point-and-clicks are great because they have a lot of story flexibility, but the puzzles tend to be ridiculous."

In Dead Synchronicity, the puzzles are as apt to give you nightmares as the story. It's not that the puzzles are particularly unfair. In fact, Dead Synchronicity is better than most at sticking to logical, real-life uses for objects—pry open a door with a crowbar, or tie a rope around a tree to get down a steep hill.

That makes some of the failures in logic even more noticeable, though. For instance, midway through the game you'll remove a manhole grate from a sewer. Your character will only climb down partway, though, before saying something like, "I can't go down there without a light."

There's an oil lamp in the first room of the game. You cannot take this oil lamp. Your character flat-out refuses, and not because it's theft but because it would "make the room too dark." A room you have no intention of coming back to. A room with a door to the outside world, which light could shine through.

Or we can discuss prying open the door with the crowbar. The door in question is attached to an abandoned car with (as far as I can tell) no glass in its windows. Why do I need to pry the door open to get at the two items inside when I could just crawl through the window? Or, if there is glass, break it with a rock?

Dead Synchronicity also has a tendency to give you tunnel vision, whether on purpose or not. You'll find a camera, for instance. You know exactly where the camera needs to be used. You'll walk across six maps to get there and then..."There's no film in this camera." Are you kidding me? Okay. So you start looking for film.

The problem? You can't find film yet. You need to solve some other, less pressing puzzles first before you'll magically find the room that has the film in it. There's no indication of this though, so you're likely to start wandering in circles, convinced there must be film. It has to be here somewhere. I'm just not looking hard enough!

And all this—everything—would be sort of forgivable in the "Well, it's a point and click adventure" way, except that the game just ends partway through. This is the biggest sin of Dead Synchronicity, and it turned me from loving the game to feeling cheated by it.

Dead Synchronicity feels like it's all Act One. Your character (remember: he has amnesia) finally learns one tiny piece of what's going on in this world and...credits. There's no big climactic moment or catharsis. It's an enormous cliffhanger, and for me it only took about five hours to get there. I never knock a game's length, as long as it accomplishes what it's trying to accomplish. I don't think Dead Synchronicity does. It's just arbitrarily over.

The ending came so suddenly I literally emailed the developers asking if perhaps our review build was broken. Had they given us an extended demo build by accident? Nope, that was the ending. It's a real shame, because the game up to that point is extremely interesting. I just felt burned, like I'd invested a portion of myself into something only to have it yanked away.

I suspect the lack of a true resolution has to do with the fact this was a Kickstarter project—I could see a small studio running out of time/money and saying "We need to wrap this up." Regardless, it cheats what could've been one of my favorite point-and-clicks this year.

As I said, I'm mixed on Dead Synchronicity. I'd love to see more games take this sort of "adult" approach to point-and-clicks. The '90s had quite a few, a la I Have No Mouth and I Must Scream, and it's amazing how dark you can make what's typically seen as a family-friendly genre these days.

But those puzzles. But that ending. Those are the phrases that keep running through my brain, even as I mull over the positives. Knowing my own frustration, I find it hard to recommend that experience to anyone else (let alone tack a score on the game).

My only hope is that if you do pick up the game, you're suitably warned about its pitfalls. Maybe that will help you better appreciate what's there, without being blindsided by its failings.
